Paul Finch, COO, Kao Data, tells us why the data centre industry can no longer be reliant on ‘feeling’ its way forward to achieving reduced energy use. He says there are now standard processes that provide scientific support to more efficient and effective data centre businesses.

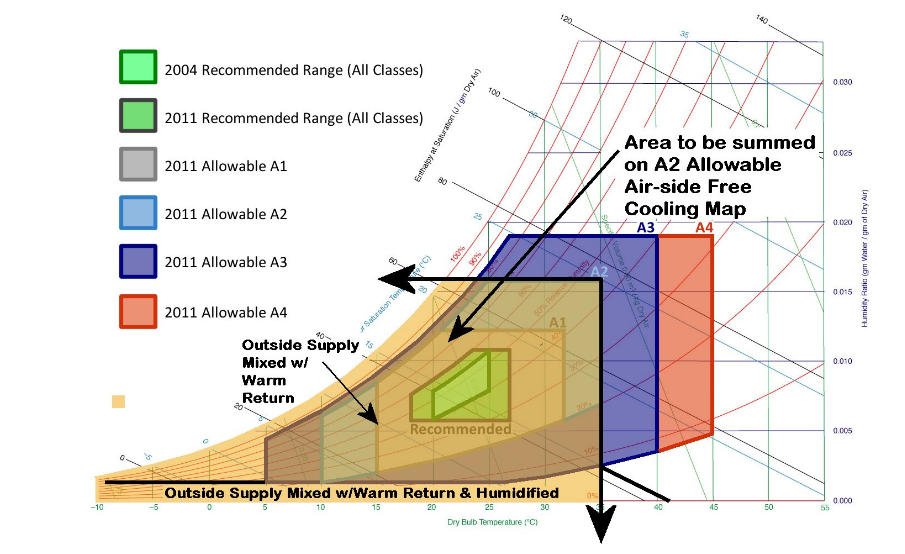

Data centres live or die by their uptime and availability, so equipment reliability is paramount. Working with ASHRAE TC9.9 guidelines, server, storage and networking manufacturers have for some time been engineering their equipment to perform across the full ‘Recommended’ range. Extending into the wider ‘Allowable’ environmental range allows IT equipment to operate more efficiently and brings two gains:

- The data centre dedicates less power to cooling, which reduces energy costs.

- As the air inlet temperature increases, so does the free-cooling opportunity. Applied innovatively with appropriate cooling technologies, this can eliminate the need for mechanical refrigeration.

In specific applications, IT equipment manufacturers even allow time-limited excursions to environmental temperatures of up to 45°C without affecting the manufacturer’s warranty. In real-life environments, the primary factor determining system failure rate is component temperature. Equipment improvements now in place provide high reliability and reduce the risk of device thermal shutdown, which has caused major data centre outages during the past few years.

Delivering lower server inlet temperatures usually requires large, complex, expensive equipment and cooling infrastructure. The more equipment on-site, the greater the overall complexity and the lower the reliability is likely to be. All equipment requires maintenance and servicing, and it is sensible to assume that at some point during its life-cycle it will fail.

Furthermore, energy is the biggest OpEx item for a data centre, and mechanical cooling represents the largest proportion of energy use after the IT load. Cooling therefore represents the greatest opportunity for energy and cost savings.

Correspondingly, reducing the energy used within the data centre infrastructure effectively releases that capacity for more IT utilisation.

Reducing complexity is critical to efficiency and sustainability, and achieving a ‘flat PUE response’ from 25% to 100% load drives up availability and uptime. Demonstrating that a low PUE, from 1.2 down towards 1.0, is achievable is not simply a marketing tool but a fiscal and ecological responsibility for data centre operators.
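To make the idea of a flat PUE response concrete, the sketch below uses the standard definition of PUE (total facility power divided by IT power) to compare two hypothetical cooling plants across the load range. All figures are illustrative assumptions, not Kao Data measurements: a 1,000 kW design IT load, a ‘legacy’ plant with a fixed 100 kW overhead plus 10% of IT load, and a scalable plant whose overhead is always 20% of IT load.

```python
def pue(it_kw: float, overhead_kw: float) -> float:
    """Power usage effectiveness: total facility power divided by IT power."""
    if it_kw <= 0:
        raise ValueError("IT load must be positive")
    return (it_kw + overhead_kw) / it_kw


def usable_it_kw(supply_kw: float, pue_value: float) -> float:
    """IT load that a fixed utility supply can support at a given PUE."""
    return supply_kw / pue_value


DESIGN_IT_KW = 1000.0  # hypothetical design IT load

for fraction in (0.25, 0.50, 1.00):
    it_kw = DESIGN_IT_KW * fraction
    # Assumed legacy plant: 100 kW fixed overhead plus 10% of IT load.
    legacy = pue(it_kw, 100.0 + 0.10 * it_kw)
    # Assumed scalable plant: overhead is always 20% of IT load.
    flat = pue(it_kw, 0.20 * it_kw)
    print(f"{fraction:.0%} load: legacy PUE {legacy:.2f}, flat PUE {flat:.2f}")

# Capacity released on a hypothetical 10 MW supply by moving from PUE 1.5 to 1.2.
released_kw = usable_it_kw(10_000, 1.2) - usable_it_kw(10_000, 1.5)
print(f"IT capacity released: {released_kw:.0f} kW")
```

Under these assumptions the legacy plant drifts from a PUE of 1.50 at 25% load to 1.20 at full load, while the scalable plant holds 1.20 throughout. On the assumed 10 MW supply, the move from 1.5 to 1.2 frees roughly 1,667 kW for IT use, which is the capacity-release effect described above.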

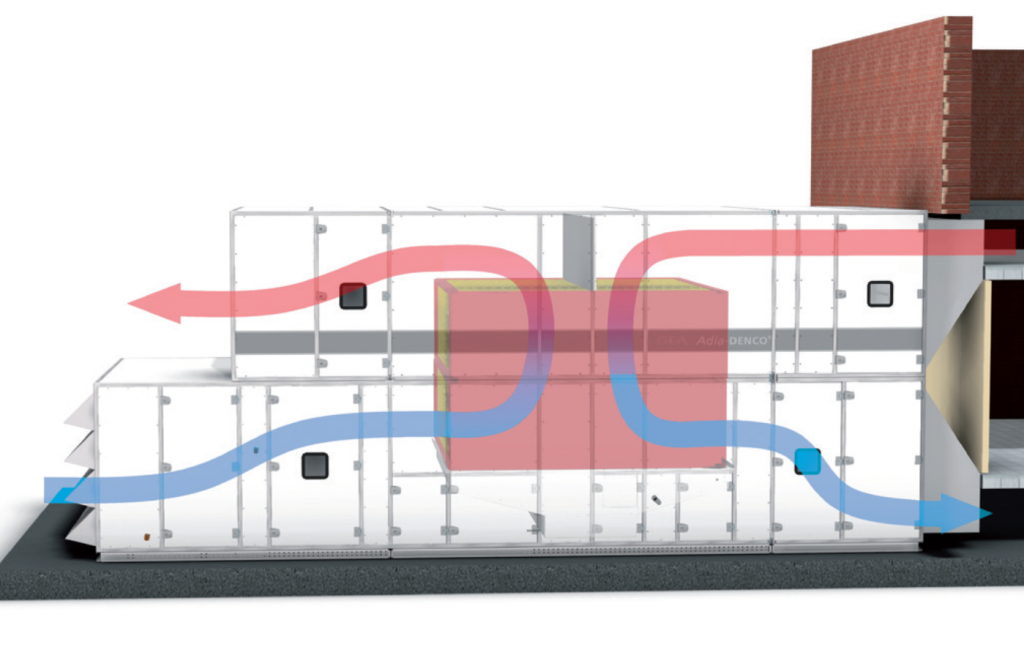

Indirect Evaporative Cooling – IEC

In comparison to traditional chilled water or refrigerant-based systems, IEC is relatively uncomplicated. It still requires mechanical ventilation in the form of fans, together with a heat exchanger, but it has few moving parts.

Heat is rejected when the warm return air is passively cooled through contact with a plate that has been evaporatively cooled on its adjacent, atmospheric side.

A benefit is that no moisture is added to the supply side air stream as it returns to the data hall, maintaining the humidity within the hall. This ensures the precise supply air inlet conditions can be delivered to support the IT load.

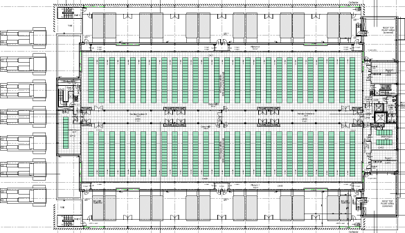

However, air movement, even within a confined space, can be chaotic. Many data centre designs now incorporate CFD (computational fluid dynamics) to model the air movement around the IT space. The modelling is complex but greatly reduces risk, as it provides a detailed theoretical model of how the technical space will perform and react to dynamic loads.

Modelling also assists in rack layout. Our results demonstrated that hot aisle containment (HAC) systems, which stop hot and cold air mixing in and around the cabinets, offered the most efficient design for controlled air-flow circulation around the IT hall. HAC draws cool air into the front of the contained cabinets and through the IT equipment, then expels hot air up and out into the ceiling space to return to the IEC system, where the heat is rejected.

IEC, when used effectively, allows data centre designers to match the environmental conditions in the data halls to the free-cooling opportunity available within their specific geography. This ensures that evaporative cooling is effective for far longer throughout the year.
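As a rough illustration of matching hall conditions to local climate, the sketch below estimates the share of hours in which evaporative cooling alone could meet a given supply-air setpoint. It is a simplification under stated assumptions: the function name, the 4°C approach temperature and the sample wet-bulb readings are all hypothetical, and a real assessment would use a full year of hourly weather data for the site and the performance data of the specific IEC unit.

```python
def free_cooling_fraction(wet_bulb_c, supply_setpoint_c, approach_c=4.0):
    """Fraction of hours in which evaporative cooling alone meets the setpoint.

    Assumes the unit can deliver supply air no cooler than the ambient
    wet-bulb temperature plus a fixed approach (an illustrative figure).
    """
    if not wet_bulb_c:
        raise ValueError("at least one wet-bulb reading is required")
    limit_c = supply_setpoint_c - approach_c
    hours_ok = sum(1 for t in wet_bulb_c if t <= limit_c)
    return hours_ok / len(wet_bulb_c)


# Hypothetical ambient wet-bulb readings in degrees Celsius.
sample_wet_bulb_c = [8.0, 12.0, 16.0, 19.0, 21.0, 23.0]

for setpoint_c in (22.0, 27.0):
    share = free_cooling_fraction(sample_wet_bulb_c, setpoint_c)
    print(f"Supply setpoint {setpoint_c:.0f} C: {share:.0%} of sampled hours")
```

With this sample data, raising the supply setpoint from 22°C to 27°C lifts the evaporative-only share from half of the sampled hours to all of them, which is the mechanism behind operating further into the wider environmental range.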

The latest IT equipment (servers, storage and networking equipment) is developed to operate within the conditions delivered by non-mechanical cooling systems such as IEC, even with maximum predicted annual temperature excursions. In many locations and business strategies, the massive capital cost of installing traditional chilled water and refrigerant-based systems can be avoided.

Conclusion

The data centre market has become increasingly competitive as the industry continues to expand into new regions, and that growth consumes increasing amounts of energy. Economic, public, political and social pressures demand more efficiency from the industry. Energy is a large component of the data centre cost structure and a key focus for external policy makers.

Developments in cooling technology and the correct application of techniques offer a transparent path to designing and implementing non-mechanical cooling strategies. These reduce complexity, increase reliability and maximise the operational hours of minimal-cost cooling.

The correct application of the ASHRAE environmental classes and broader thermal guidelines will drive PUE lower, not only at peak load but consistently, delivering sub-1.2 PUE and a far smaller impact from a sustainability perspective.

Our industry is no longer reliant on feeling our way forward to reduced energy use. We have standard processes that provide scientific support to more efficient and effective data centre businesses.
